NSF CAREER Smart Learning in Multi-person VR

Immersive multi-person virtual reality research using multimodal analysis of physiological measures.

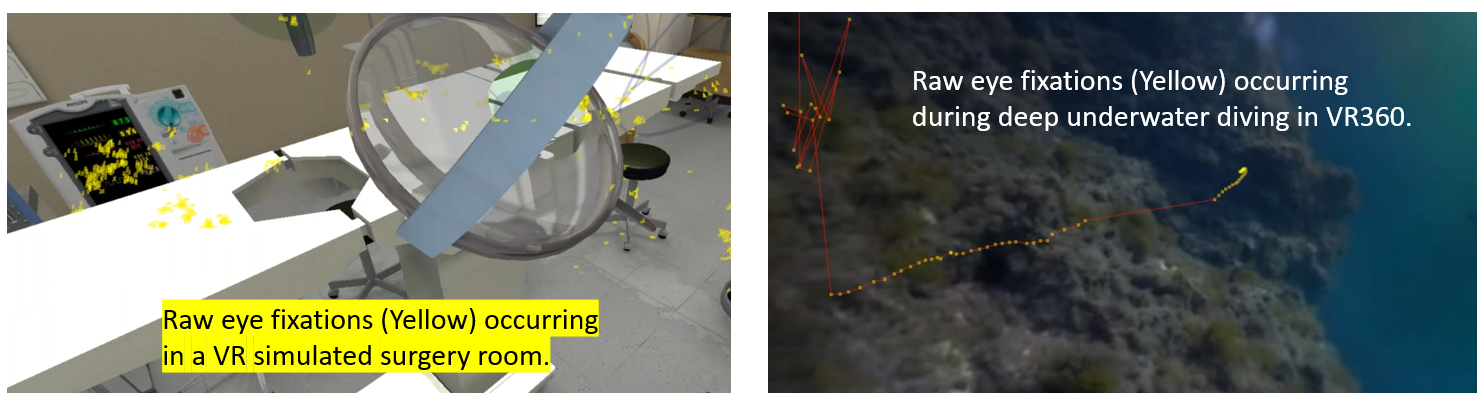

This project studies how physiological measures can be analyzed in near real time to understand learner engagement in fully immersive multi-person virtual reality.

- nsf

- virtual reality

- smart learning

- multimodal

- eye tracking

Project scope

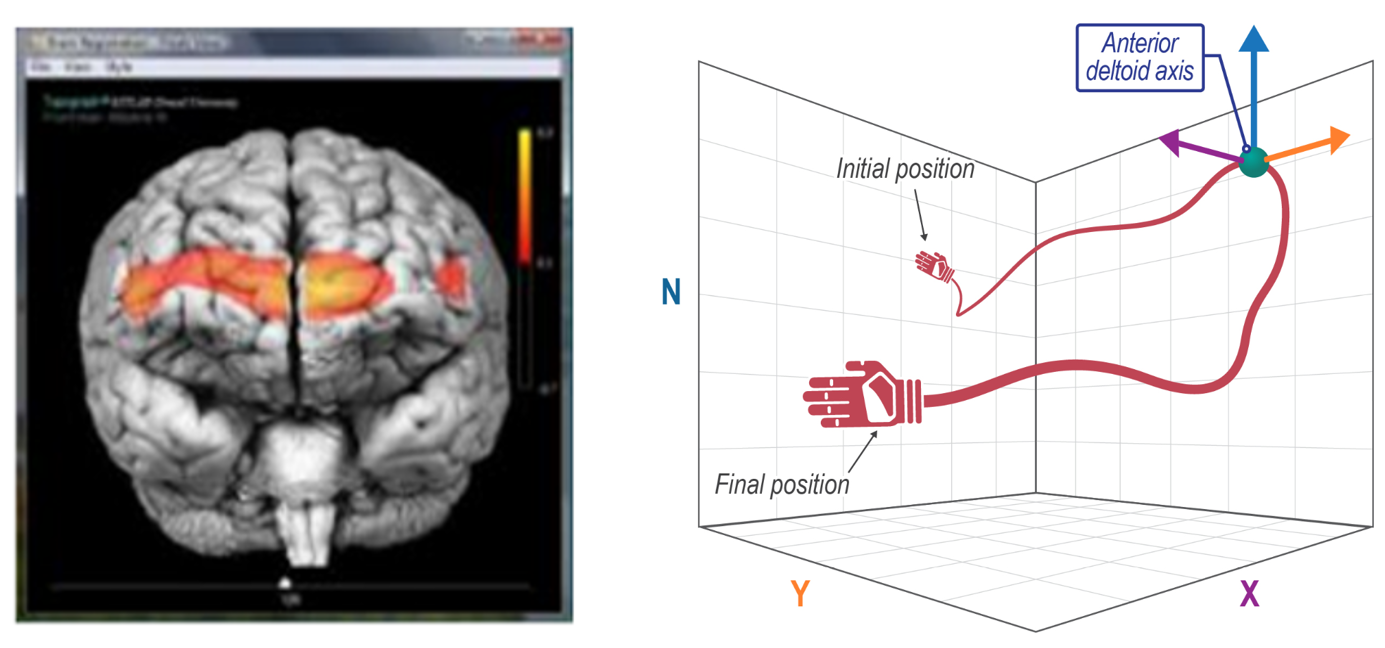

The project investigates non-text-based smart learning in immersive multi-person VR, drawing on eye movements, brain activity, and haptic interactions.

- Non-text-based smart learning refers to technology-supported learning that uses visualized information and adapts material to individual needs.

- The work focuses on eye movement characteristics, haptic interactions, and brain activity to support prediction and timely scaffolding during learning.

Researchers

- Dr. Ziho Kang

- Ricardo Palma Fraga

- Junehyung Lee

- Collaborators at Drexel University and Kent State University